01. Research & Objectives

Part of the NYU VIP program, this project focuses on creating immersive educational labs. Our current objective is to replicate the university MakerSpace in VR and make it accessible through Mixed Reality devices such as the Meta Quest 3.

Short-Term Goals

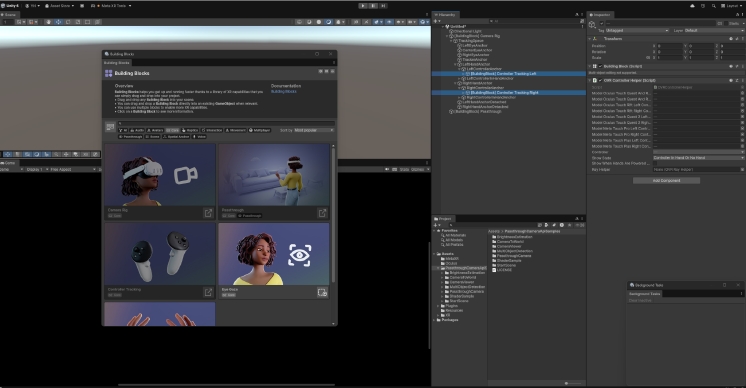

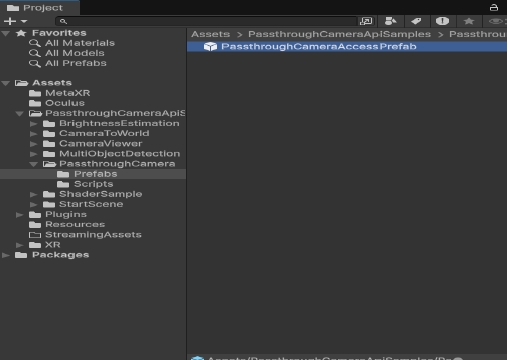

- Develop a virtual MakerSpace environment using Unity Engine.

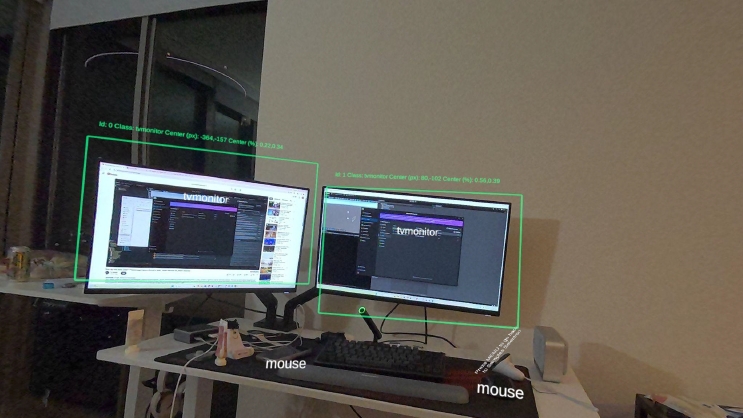

- Integrate sensing technologies to track physical workspace dynamics.

- Implement AI-driven object recognition for machine tutorials.

02. Technical Documentation

As part of the AR Subgroup, I manage the Mixed Reality pipeline. Because the SDKs involved change rapidly, much of this work has been troubleshooting breaking changes and planning migrations between SDK versions.

03. Current Status

This project is in progress. Development is currently focused on stabilizing the core pipeline while the SDKs undergo name changes and compatibility updates.

This experience allowed me to extend my existing background to C# scripting and Unity's component-based architecture. I am now focused on completing the full implementation in the upcoming term.